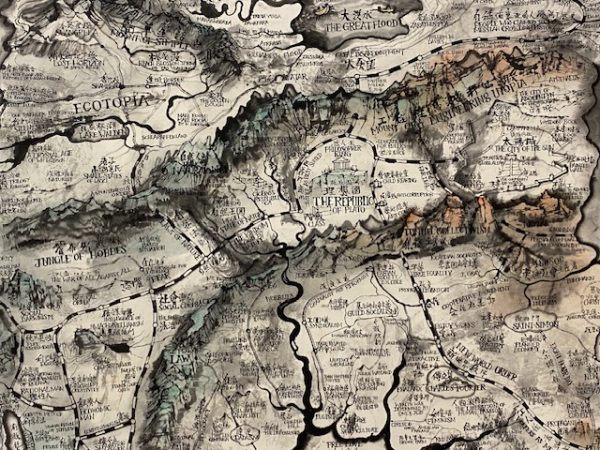

Most people enjoy the banks of the Seine in Paris. Ever since the 2024 Olympics in Paris, the temptation to go swimming there, or to take up some other water-related activity, has been high. Fewer people are aware of another splendid location near the Gare de l’Est. The Canal Saint-Martin and the nearby end of the Canal de l’Ourcq (image below) have much to offer stressed people who want to take some time off and relax. You can pick your favorite among the cinemas, cafés and bars to pass time with friends or family, or just keep walking for miles to get the exercise you need to keep your body healthy and in shape. Cooler, more humid areas like these have a high health value that benefits everyone, without forcing people to travel long distances for recreation or to bring their weight or heart rate back to normal.