The annual science fair at Hannover is a kind of show of things to touch and of things that will come to the public market in the near future. Most of the annual hype is about the potential of production. Rationalization, using fewer resources and innovative digitization solutions are high on the agenda. Create your digital twin, save energy, make production safer or more cyber-secure.

Robotics is another reason to visit the fair. Some 7 years ago I had my Sputnik experience there. The robotics company KUKA demonstrated live that assembling a car from pre-manufactured components takes the robots just 10 minutes. Shortly afterwards the whole company was bought by Chinese investors. Roughly 5 years later we are swamped by cars from China. It was not that difficult to predict this at the time. Okay, we need to focus on more value-added production and take our workforces (not only) in Europe along on the way. Reclaiming well-paid, unionized jobs in manufacturing, as Joe Biden is trying to do, will not be an easy task. Robots and their programming are expensive, but so are skilled workers. Hence, the solution is likely to be robot-assisted manufacturing as a kind of hybrid solution for cost-effective production systems.

Following the proceedings of the 2024 fair, we are astonished to realize that visiting the fair is still a rather “physical exercise” of walking through the halls. After the Covid-19 shock we expected a lot more “online content”. Instead we keep being referred to webpages and newsletters rather than virtual visits and tours. Preparing the visit in advance remains a laborious adventure. However, in-person networking in the industry is greatly helped by the ease of exchanging virtual business cards and by the “FEMWORX” activities.

This year’s Sputnik moment at Hannover is most likely related to the pervasive applications of AI across all areas of the industry and along the whole supply chain. Repairing and recycling have become mainstream activities (www.festo.com). Robotics for learning purposes can also be found, to get you started with automating boring household tasks (www.igus.eu).

Visiting Hannover in person still involves lengthy road travel or expensive public transport (DB with ICE). Autonomous driving and ride-sharing solutions might be a worthwhile topic for next year’s fair. Last year I thought we would meet in the “metaverse fair” rather than in Hannover in 2024. Be prepared for another Sputnik moment next year, maybe.

(Image: Consumer’s Rest by Stiletto, Frank Schreiner, 1983)

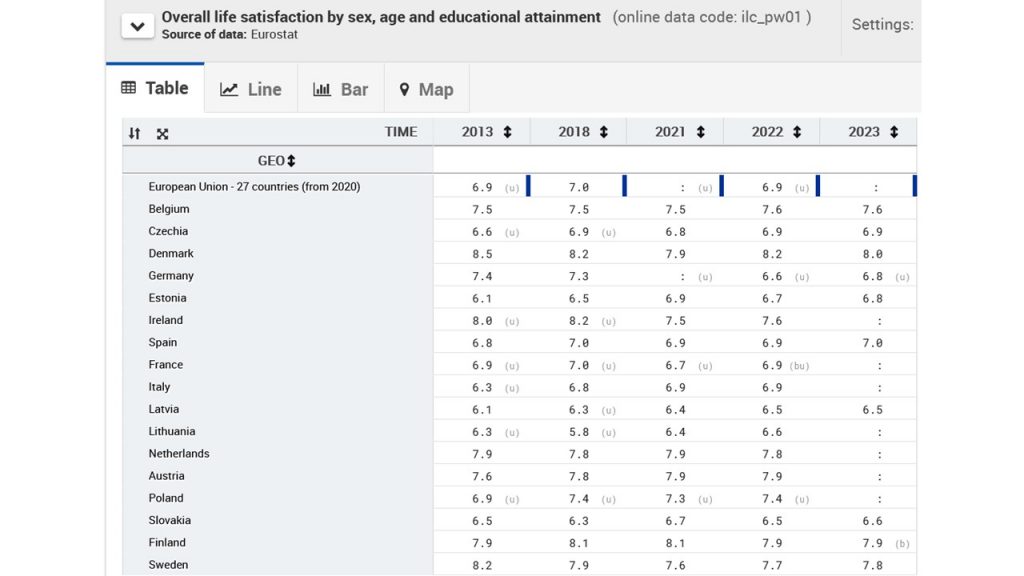

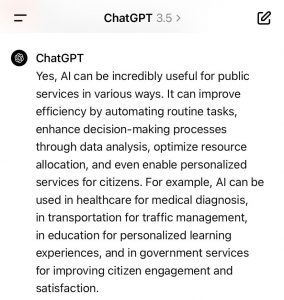

The AI ChatGPT advocates AI for the public services (PS) for mainly 4 reasons: (1) efficiency; (2) personalisation of services; (3) citizen engagement; (4) citizen satisfaction. (See image below). The perspective of employees of the public services is not really part of the answer by ChatGPT. This is a more ambiguous part of the answer and would probably need more space and additional explicit prompts to elicit an explicit answer on the issue. With all the known concerns about AI, like gender bias or biased input data, the introduction of AI in public services has to be accompanied by a thorough monitoring process. The legal limits on applications of AI are stricter in public services, as the production of official documents is subject to additional security concerns.

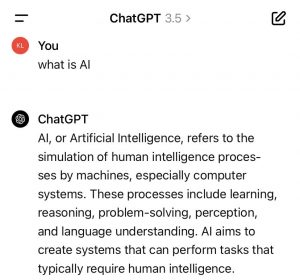

(See image). ChatGPT provides a more careful definition than the “crowd” or networked intelligence of Wikipedia. AI only “refers to the simulation” of human intelligence (HI) processes by machines. Examples of such HI processes include the solving of problems and the understanding of language. In doing this, AI creates systems and performs tasks that usually, or until now, required HI. There seems to be a technological openness embedded in the definition of AI by AI that is not bound to legal restrictions on its use. The learning systems approach might or might not respect the restrictions set on the systems by HI. Or do such systems also learn how to circumvent the restrictions that HI sets to limit AI systems? For the time being we test the boundaries of such systems in multiple fields of application, from autonomous driving systems and video surveillance to marketing tools and public services. Potentials as well as risks will be defined in more detail in this process of technological development. Society has to accompany this process with high priority since fundamental human rights are at stake. The potential for assisting humans is equally large. The balance will be crucial.
