Our workdays have changed considerably over the last few years. The home office boom has allowed employees to work from home for extended hours. There is an abundant literature on the effects of home office work on well-being and work-life balance. Employers could reap productivity gains, and a better work-life balance was a lasting advantage for employees.

The increased use of occupation-specific AI has introduced a new source of productivity gains for some occupations and professions, where AI acts as a complement to human work, whereas other occupations face a higher risk of being substituted by AI applications.

Based on time diary data, the study by Wei Jiang et al. (2025) reports that users of AI work longer hours and have less leisure time. Competitive labor markets increase the pressure to put in even longer hours of work. Nerds, just like workaholics, are likely to be drawn into excessive hours of work with increased health risks. Enterprises and consumers appear to be gaining more than the employees, who are at a higher risk of losing out on their work-life balance over time.

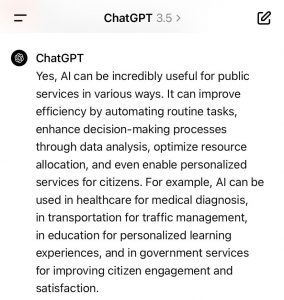

ChatGPT advocates AI for public services (PS) for four main reasons: (1) efficiency; (2) personalisation of services; (3) citizen engagement; (4) citizen satisfaction. (See image below.) The perspective of public service employees is not really part of ChatGPT's answer. This is a more ambiguous aspect and would probably need more space and additional explicit prompts to elicit an explicit answer on the issue. With all the known concerns about AI, such as gender bias or biased input data, the introduction of AI in public services has to be accompanied by a thorough monitoring process. The legal limits on applications of AI are stricter in public services, as the production of official documents is subject to additional security concerns.

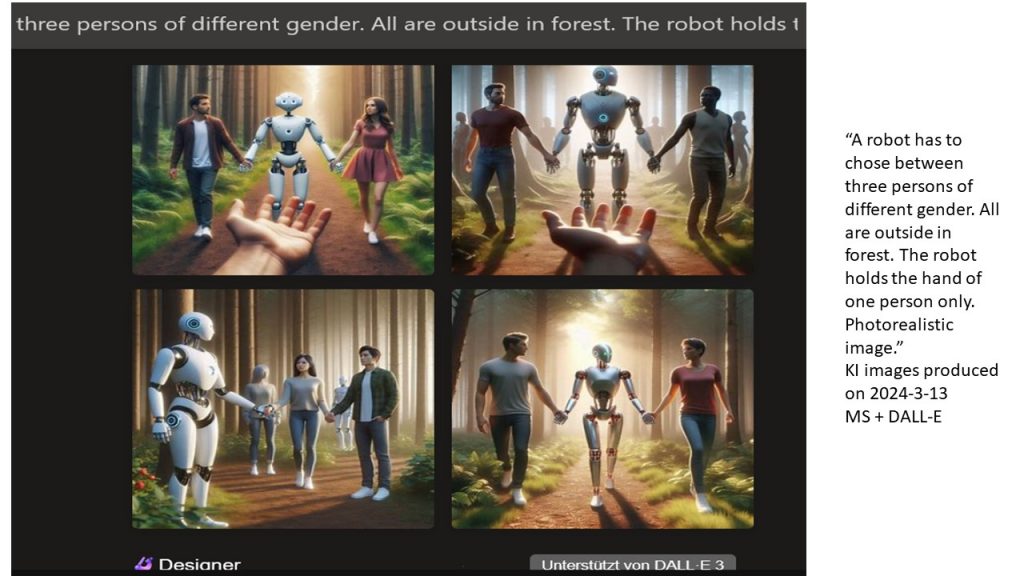

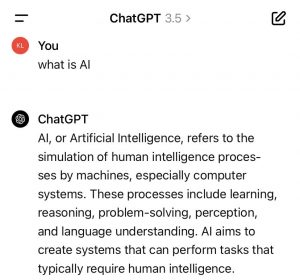

(See image). ChatGPT provides a more careful definition than the “crowd” or networked intelligence of Wikipedia. AI only “refers to the simulation” of human intelligence (HI) processes by machines. Examples of such HI processes include solving problems and understanding language. In doing this, AI creates systems and performs tasks that usually, or until now, required HI. There seems to be a technological openness embedded in the definition of AI by AI that is not bound to legal restrictions on its use. The learning systems approach might or might not respect the restrictions that HI sets for such systems. Or do such systems also learn how to circumvent the restrictions that HI sets to limit AI systems? For the time being we are testing the boundaries of such systems in multiple fields of application, from autonomous driving systems and video surveillance to marketing tools and public services. Potentials as well as risks will be defined in more detail in this process of technological development. Society has to accompany this process with high priority, since fundamental human rights are at issue. The potential for assisting humans is equally large. The balance will be crucial.
